This is the introductory article in my series (a mini-course) on ‘Monitoring at FAANG scale’.

Intro

Monitoring is the practice of collecting and analyzing system metrics, logs, and events so you can spot issues early, understand performance, and get alerted when things go wrong.

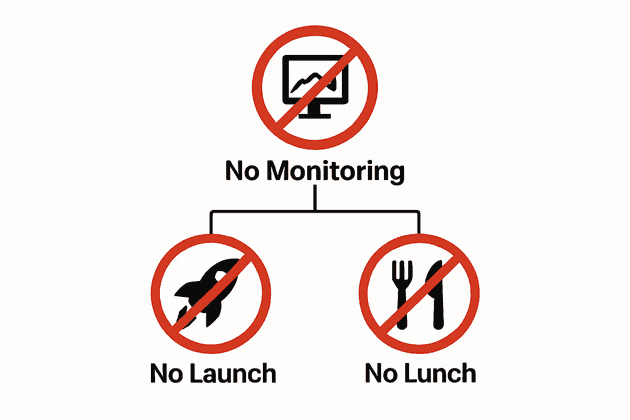

When there is no monitoring, there is no launch… also, there is no lunch 🥘😁. Ship a service without monitoring (or with bad monitoring), and while everyone else enjoys their lunch, you’ll be firefighting production issues.

Why is monitoring a must-have?

At FAANG scale, we always treat monitoring as part of the project: your system design is not complete unless you explicitly define how you are going to monitor each component. A service isn’t complete just because the code is merged and the feature flag is flipped. All known failure cases must have continuous monitoring set up to give you the required transparency on 1) what is working, 2) what is not working, and 3) what is about to stop working, so that you can act proactively.

Good monitoring protects your customers by catching issues before they do, protects the engineering team by giving them confidence, and protects your business by safeguarding service availability, SLAs *, and customer trust.

* SLA (Service Level Agreement) is a formal contract between a service provider and a customer that clearly defines the expected level of service, performance standards, and responsibilities for both parties.

As an example, say you deployed a new payment service without monitoring. If payments start failing silently, you won’t know until your customers get frustrated and contact support, or until the payment rate suddenly drops; by then it’s already too late. Monitoring is therefore a core pillar of every serious software business: without it, customer trust is impossible to maintain.

When is your monitoring ‘good enough’ to launch?

I love the concept of ‘good enough’ software as described in the book The Pragmatic Programmer, which I also apply when I think about monitoring my service:

‘Good enough’ software is software that meets the necessary quality standards and user needs within its context, achieved through pragmatic trade-offs rather than chasing impossible perfection.

Similarly, monitoring is not about building fancy-looking dashboards with hundreds of metrics that nobody looks at, just to show them off in a sprint demo. It is about identifying the failure cases that would impact your customers, making your system transparent enough to signal when they happen or are about to happen, and defining clear action items to mitigate or prevent them.

Bare minimum checklist

This is the bare-minimum general checklist you need to tick off before launch:

- ✅ Basic service health is covered with metrics and alarms: latency, errors, traffic volume, per-resource quota usage, etc.

- ✅ Each alarm is linked to a specific run-book (or SOP *), so the on-call engineer knows the path to mitigation.

- ✅ Logs are structured and machine-readable, capturing enough context about a failure.

- ✅ Dashboards are created for aggregate view and ad-hoc reviews.

- ✅ Ownership is clearly defined: which team owns what component.

* SOP (Standard Operating Procedure) is a set of step-by-step instructions compiled by an engineering team to formalize a routine operation, such as mitigating a particular failure in a software system or resolving a specific customer request, so that the result has consistent quality and performance irrespective of the operator.
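To make the ‘structured and machine-readable logs’ item concrete, here is a minimal sketch using only Python’s standard `logging` module. The `JsonFormatter` class, the `context` key passed through `extra`, and the field names (`order_id`, `error_code`) are assumptions made for illustration, not a prescribed schema.

```python
import io
import json
import logging


class JsonFormatter(logging.Formatter):
    """Render each log record as a single JSON object per line."""

    def format(self, record: logging.LogRecord) -> str:
        payload = {
            "time": self.formatTime(record),
            "level": record.levelname,
            "logger": record.name,
            "message": record.getMessage(),
            # Hypothetical convention: callers pass extra={"context": {...}}.
            **getattr(record, "context", {}),
        }
        if record.exc_info:
            payload["exception"] = self.formatException(record.exc_info)
        return json.dumps(payload)


def build_logger(name: str, stream) -> logging.Logger:
    """Create a logger that writes JSON lines to the given stream."""
    logger = logging.getLogger(name)
    logger.setLevel(logging.INFO)
    logger.handlers.clear()
    handler = logging.StreamHandler(stream)
    handler.setFormatter(JsonFormatter())
    logger.addHandler(handler)
    logger.propagate = False
    return logger


# Usage: log a failure together with the context needed to debug it.
buffer = io.StringIO()
log = build_logger("payments", buffer)
log.error(
    "payment failed",
    extra={"context": {"order_id": "o-123", "error_code": "CARD_DECLINED"}},
)
line = json.loads(buffer.getvalue())
```

Because every line is valid JSON, a log pipeline can filter on fields such as `error_code` instead of parsing free text.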

Embedding monitoring in the development lifecycle

To ensure it is not missed, monitoring should be treated as a first-class citizen throughout the software development lifecycle.

Design documents

Monitoring requirements belong in design documents, just like functional and non-functional requirements. One way to enforce this is a team-wide or org-wide design document template with a dedicated monitoring section, which can also list the team’s monitoring best practices to take into consideration.

Code reviews

Once the design phase of a system is complete, it is important to keep the monitoring bar high through careful code reviews. As with design documents, a code review description template can include a checklist so the author confirms that the change 1) uses structured logging, 2) updates alarm thresholds where the change in functionality requires it, 3) extends the SOPs when it adds a new alarm, and so on.
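Part of such a checklist can also be automated. The sketch below is one possible check, assuming alarms are declared in code as simple records; `AlarmDefinition`, its fields, and the example URL are hypothetical names for illustration, not part of any real library.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class AlarmDefinition:
    """Hypothetical in-code declaration of an alarm."""
    name: str
    metric: str
    threshold: float
    runbook_url: Optional[str] = None


def alarms_missing_runbook(alarms: List[AlarmDefinition]) -> List[str]:
    """Return the names of alarms that have no run-book (SOP) link."""
    return [a.name for a in alarms if not (a.runbook_url or "").strip()]


alarms = [
    AlarmDefinition(
        name="HighLatencyP99",
        metric="latency_p99_ms",
        threshold=500.0,
        runbook_url="https://wiki.example.com/runbooks/high-latency",
    ),
    # A newly added alarm whose author forgot to extend the SOPs.
    AlarmDefinition(name="ErrorRateSpike", metric="error_rate", threshold=0.01),
]

missing = alarms_missing_runbook(alarms)
```

Run as a unit test or pre-merge check, a non-empty `missing` list would fail the build, so the reviewer does not have to catch the omission by eye.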

Make it ‘just work’ and ‘for free’

I remember a dashboard review I took part in, where a senior engineer gave great feedback prompted by a miss in monitoring:

Let’s make it such that each system we create gets basic monitoring (like heap and disk usage alarming) for free; and that engineers need to actually do work to remove that, which they will not do…

The takeaway is that you should strive to minimize the development cost of monitoring and make it as difficult as possible to forget about. You can achieve that by creating reusable libraries and components for your backend development framework or for your IaC (Infrastructure as Code). For example, if you use the AWS CDK and want to create an AWS Lambda function, you can write a custom, reusable MonitoredLambdaFunction CDK construct that wraps the raw Lambda function and creates the alarms on duration (latency) and errors. All engineers need to do is import it from the team library, and monitoring ‘just works’ for them, ‘for free’.
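The same idea applies on the backend-library side. The sketch below is not the CDK construct itself but a plain-Python analogue: a hypothetical `monitored` decorator from a team library that records latency and errors for any function it wraps. The in-memory `METRICS` store and the `emit` helper stand in for a real metrics client and are assumptions of this example.

```python
import functools
import time
from collections import defaultdict

# In-memory stand-in for a real metrics client (assumption for this sketch).
METRICS = defaultdict(list)


def emit(name: str, value: float) -> None:
    """Record one data point for the named metric."""
    METRICS[name].append(value)


def monitored(func):
    """Wrap a function so latency and error metrics are emitted on every call."""

    @functools.wraps(func)
    def wrapper(*args, **kwargs):
        start = time.perf_counter()
        try:
            result = func(*args, **kwargs)
        except Exception:
            emit(f"{func.__name__}.errors", 1)
            raise  # Never swallow the caller's exception.
        else:
            # Emitting 0 on success makes an error rate easy to compute.
            emit(f"{func.__name__}.errors", 0)
            return result
        finally:
            elapsed_ms = (time.perf_counter() - start) * 1000
            emit(f"{func.__name__}.latency_ms", elapsed_ms)

    return wrapper


@monitored
def charge(amount: float) -> str:
    """Hypothetical business function; it contains no monitoring code."""
    if amount <= 0:
        raise ValueError("amount must be positive")
    return "ok"


charge(10)
try:
    charge(-1)
except ValueError:
    pass
```

The business function stays free of monitoring code, and removing the metrics requires deliberately deleting the decorator, which is exactly the default the senior engineer was asking for.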

Conclusion

To wrap up: monitoring isn’t optional infrastructure; it’s a core feature of any software service, essential for reliability and customer trust. Given its importance, the next articles in the Monitoring at FAANG scale series will dive deeper into its different aspects and explore what makes for good monitoring.

See you in the next articles as I dive deep into Monitoring at FAANG scale!